GPU Memory Bandwidth: The Critical Bottleneck Limiting Modern Graphics Card Performance

Graphics card discussions usually revolve around core counts, clock speeds, and raw computational power. Marketing materials reinforce this perspective, highlighting shader units, boost frequencies, and architectural improvements. Yet beneath these headline specifications lies a constraint that frequently dictates real-world behavior: GPU Memory Bandwidth. While often overlooked, bandwidth limitations can silently shape frame delivery, texture handling, and rendering stability even on otherwise powerful hardware.

Modern GPUs operate in an environment defined by data movement. Every frame rendered requires constant transfers of textures, geometry data, shading instructions, and intermediate results between processing cores and video memory. The efficiency of this exchange is not determined solely by memory capacity. Instead, the rate at which data can be delivered becomes a decisive factor. This is where GPU Memory Bandwidth emerges as one of the most influential yet misunderstood aspects of graphics performance.

Performance Beyond Raw Compute Power

In theoretical scenarios, a graphics processor may possess enormous computational throughput. Thousands of parallel cores can execute complex shading operations at remarkable speeds. However, computational capability alone does not guarantee consistent output. Rendering workloads are data-hungry, and processing units cannot operate efficiently if memory subsystems fail to supply information quickly enough. In such cases, the GPU transitions from being compute-bound to bandwidth-bound.

This shift explains a common paradox observed by enthusiasts: a graphics card with superior core specifications occasionally performs similarly to weaker alternatives. When workloads depend heavily on memory transactions, such as sampling high-resolution textures or running advanced lighting calculations, insufficient GPU Memory Bandwidth can restrict the entire pipeline. Processing units remain underutilized, waiting for data rather than executing instructions.
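One common way to reason about this shift is the roofline model. The text above does not name it, so treat it here as an assumed simplification: attainable throughput is the lesser of peak compute and memory bandwidth multiplied by arithmetic intensity (operations performed per byte moved). The sketch below uses made-up figures for two hypothetical cards that share the same memory subsystem; the function names are illustrative only.

```python
def attainable_tflops(peak_tflops, bandwidth_gbs, flops_per_byte):
    """Roofline estimate of sustained throughput in TFLOP/s."""
    # GB/s * FLOP/byte = GFLOP/s; divide by 1000 for TFLOP/s.
    bandwidth_limit = bandwidth_gbs * flops_per_byte / 1000.0
    return min(peak_tflops, bandwidth_limit)


def is_bandwidth_bound(peak_tflops, bandwidth_gbs, flops_per_byte):
    """True when the workload sits below the roofline ridge point."""
    ridge_flops_per_byte = peak_tflops * 1000.0 / bandwidth_gbs
    return flops_per_byte < ridge_flops_per_byte


# Hypothetical cards: different compute peaks, identical 500 GB/s memory.
smaller_card = attainable_tflops(20.0, 500.0, flops_per_byte=10.0)
larger_card = attainable_tflops(30.0, 500.0, flops_per_byte=10.0)
print(smaller_card, larger_card)
```

Under these assumed numbers both cards land on the same estimate, because the workload moves too many bytes per operation for the extra compute to matter.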

The Mechanics of Memory Throughput

Bandwidth describes the volume of data transferred between GPU cores and VRAM per unit of time, usually quoted in gigabytes per second (GB/s). It is governed by multiple hardware characteristics, including memory clock speed, bus width, and memory technology. Wider buses allow more data to travel simultaneously, while faster memory increases the number of transfers per second on each pin. As a first approximation, peak bandwidth is the bus width in bytes multiplied by the effective per-pin data rate. Together, these elements define the ceiling of GPU Memory Bandwidth.
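The relationship between bus width, data rate, and peak bandwidth can be sketched in a few lines. The configurations below are illustrative values rather than the specifications of any particular product, and the result is a theoretical ceiling that real workloads do not fully reach.

```python
def peak_bandwidth_gbs(bus_width_bits, data_rate_gbps_per_pin):
    """Theoretical peak memory bandwidth in GB/s."""
    bytes_per_transfer = bus_width_bits / 8  # bus width expressed in bytes
    return bytes_per_transfer * data_rate_gbps_per_pin


# Illustrative (bus width in bits, effective Gbps per pin) pairs.
for bus_bits, rate in [(128, 16.0), (256, 14.0), (384, 19.0)]:
    bandwidth = peak_bandwidth_gbs(bus_bits, rate)
    print(f"{bus_bits}-bit @ {rate} Gbps/pin -> {bandwidth:.0f} GB/s")
```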

This throughput determines how efficiently textures, frame buffers, shadow maps, and compute data circulate through the rendering process. As graphical fidelity rises, data requirements expand dramatically. High-resolution assets, complex shaders, and real-time ray tracing intensify pressure on memory channels. Without adequate bandwidth, delays propagate across the pipeline, producing performance behaviors that cannot be fully explained by GPU compute metrics alone.

Resolution Scaling and Data Pressure

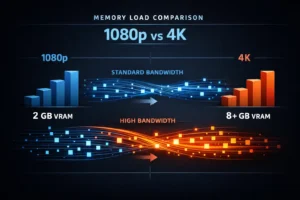

Display resolution fundamentally alters memory demands. Increasing pixel count does not simply multiply shading work; it expands the volume of memory transactions. Larger frame buffers, higher-detail texture mip levels being sampled, and greater intermediate storage requirements elevate dependency on GPU Memory Bandwidth. At higher resolutions, the GPU must continuously read and write substantially more data per frame.

This dynamic explains why certain graphics cards demonstrate strong results at 1080p yet struggle at 1440p or 4K. The computational workload may remain within the processor’s capability, but memory subsystems encounter saturation. Bandwidth constraints begin to dominate performance outcomes, revealing a bottleneck invisible in simplified spec comparisons.
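A rough estimate shows how quickly render-target traffic grows with pixel count. The bytes per pixel, the number of full-screen reads and writes per frame, and the frame rate below are all assumed values chosen for illustration. Real engines vary widely, and compression and caching reduce the actual figure.

```python
def render_target_traffic_gbs(width, height, bytes_per_pixel, touches_per_frame, fps):
    """Approximate GB/s spent reading and writing full-screen render targets."""
    bytes_per_frame = width * height * bytes_per_pixel * touches_per_frame
    return bytes_per_frame * fps / 1e9


# Assumed workload: 4 bytes per pixel, 20 full-screen touches per frame, 60 fps.
resolutions = {"1080p": (1920, 1080), "1440p": (2560, 1440), "4K": (3840, 2160)}
base = render_target_traffic_gbs(1920, 1080, 4, 20, 60)
for name, (w, h) in resolutions.items():
    traffic = render_target_traffic_gbs(w, h, 4, 20, 60)
    print(f"{name}: {traffic:.1f} GB/s ({traffic / base:.2f}x of 1080p)")
```

Because the estimate is linear in pixel count, 4K carries four times the render-target traffic of 1080p under the same assumptions, before texture and geometry traffic are counted.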

Texture Streaming and Stability

Contemporary game engines rely heavily on texture streaming systems designed to load assets dynamically. Instead of storing all resources permanently in memory, engines continuously transfer texture data based on scene requirements. These operations demand rapid memory access, making GPU Memory Bandwidth critical for maintaining visual consistency.

When bandwidth capacity falls short, streaming operations may compete with rendering tasks for memory access. The result can manifest as delayed texture updates, transient stutters, or inconsistent frame pacing. These symptoms often resemble other performance issues, leading users to misattribute their origin. In reality, memory throughput limitations frequently underpin such anomalies.

Frame delivery irregularities are sometimes discussed in broader performance analyses, including concepts explored in "Frame Time Consistency: The Invisible Factor Behind Truly Smooth Gaming". While frame pacing involves multiple variables, bandwidth constraints represent a subtle yet impactful contributor to instability.

Memory Bandwidth vs Memory Capacity

A widespread misconception equates VRAM size with overall memory performance. Capacity determines how much data can be stored locally, but it does not govern how quickly that data can be accessed. A GPU may possess abundant memory yet encounter throughput limitations if bandwidth remains restricted. Conversely, moderate capacity paired with high bandwidth can sustain smoother data flow under demanding workloads.

This distinction becomes increasingly important as game assets grow in complexity. High-resolution textures and advanced rendering techniques expand both storage and transfer requirements. Systems emphasizing capacity alone risk neglecting the performance implications of GPU Memory Bandwidth, particularly in memory-intensive scenarios.
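The difference between capacity and bandwidth can be made concrete with a small sketch. It assumes two hypothetical cards and a fixed amount of memory traffic per frame that fits comfortably in either card's VRAM. Capacity deliberately plays no part in the calculation; only bandwidth sets the ceiling.

```python
def max_fps_from_bandwidth(bandwidth_gbs, gb_touched_per_frame):
    """Upper bound on frame rate if memory traffic were the only limit."""
    return bandwidth_gbs / gb_touched_per_frame


# Two hypothetical cards: (VRAM capacity in GB, bandwidth in GB/s).
cards = {"large_but_narrow": (16, 288.0), "small_but_wide": (8, 448.0)}
gb_touched_per_frame = 4.0  # assumed traffic per frame; fits in both capacities

for name, (capacity_gb, bandwidth_gbs) in cards.items():
    ceiling = max_fps_from_bandwidth(bandwidth_gbs, gb_touched_per_frame)
    print(f"{name}: {capacity_gb} GB VRAM, ceiling {ceiling:.0f} fps")
```

In this simplified model the card with half the VRAM has the higher ceiling, as long as the working set fits in memory.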

Architectural Evolution and Bandwidth Demands

GPU architectures have evolved to accommodate escalating graphical expectations. Features such as physically based rendering, volumetric effects, and ray tracing intensify memory interactions. These technologies increase not only computational load but also memory traffic. Each advancement amplifies reliance on efficient data delivery mechanisms.

Hardware designers address these challenges through innovations including faster memory standards, wider buses, and sophisticated cache hierarchies. Despite these improvements, bandwidth constraints persist as a defining limitation. Even cutting-edge GPUs must balance processing capability against memory throughput realities.

Thermal behavior can further complicate performance analysis. When memory or core frequencies fluctuate due to heat management, effective GPU Memory Bandwidth may vary. Broader performance degradation mechanisms are discussed in "GPU Thermal Throttling: The Silent Performance Killer in Modern Graphics Cards", highlighting how multiple subsystems interact under load.

Understanding the Invisible Constraint

Bandwidth limitations rarely produce dramatic failure states. Instead, they introduce subtle inefficiencies that accumulate across rendering workloads. Slight delays in data delivery can propagate into measurable frame instability, particularly under high-resolution or memory-intensive conditions. These effects are often misinterpreted because traditional performance metrics emphasize compute characteristics.

A comprehensive evaluation of graphics performance must therefore account for GPU Memory Bandwidth alongside processing power. Ignoring memory throughput risks incomplete conclusions, especially when analyzing behavior in modern rendering environments defined by constant data exchange.

Bandwidth Bottlenecks in Real Workloads

Bandwidth constraints become most visible when rendering complexity and data volume intersect. Synthetic benchmarks may suggest strong GPU capability, yet real workloads often tell a different story. Open-world environments, high-resolution texture packs, and ray tracing pipelines impose sustained pressure on memory channels. Under these conditions, GPU Memory Bandwidth frequently emerges as the dominant limiting factor.

Ray tracing provides a clear example of bandwidth sensitivity. Rasterization tends to access memory in relatively coherent, cache-friendly patterns, whereas ray traversal produces scattered, divergent accesses that are harder to cache. Acceleration structures, traversal data, and lighting information require continuous memory access. Even GPUs with impressive compute resources may encounter throughput saturation if memory subsystems cannot keep pace.

Cache Hierarchies and Bandwidth Mitigation

Modern GPU designs incorporate advanced caching strategies to alleviate bandwidth pressure. On-chip caches reduce dependency on external VRAM by storing frequently accessed data closer to processing units. Effective cache utilization can substantially raise the effective GPU Memory Bandwidth seen by the cores, reducing both latency and off-chip traffic.

However, caches are not a universal remedy. Their effectiveness depends on workload characteristics and data locality. Highly dynamic scenes or large datasets may exceed cache capacity, redirecting pressure back to memory channels. In such cases, raw bandwidth once again dictates performance stability.
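A simple hit-rate model illustrates both the benefit and the limit of caching. It assumes that only cache misses reach VRAM and that the cache has a fixed bandwidth of its own. Both are simplifications, and the figures are invented for the example.

```python
def sustainable_request_rate_gbs(dram_bandwidth_gbs, cache_hit_rate, cache_bandwidth_gbs):
    """Request bandwidth the cores can sustain under a simple hit-rate model."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    miss_rate = 1.0 - cache_hit_rate
    if miss_rate == 0.0:
        return cache_bandwidth_gbs  # every request is served on-chip
    # Only misses reach VRAM, so DRAM bandwidth stretches by 1 / miss_rate,
    # but the cache cannot deliver more than its own bandwidth.
    return min(cache_bandwidth_gbs, dram_bandwidth_gbs / miss_rate)


# Assumed 400 GB/s VRAM and 2000 GB/s cache; hit rates are illustrative.
for hit_rate in (0.0, 0.5, 0.75):
    rate = sustainable_request_rate_gbs(400.0, hit_rate, cache_bandwidth_gbs=2000.0)
    print(f"hit rate {hit_rate:.2f}: about {rate:.0f} GB/s of requests")
```

When the hit rate falls toward zero, as with large or poorly localized datasets, the result collapses back to the raw VRAM figure.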

Memory Bus Width and Its Practical Impact

Memory bus width directly shapes throughput potential. Wider buses enable parallel data transfers, increasing total bandwidth. GPUs positioned in higher performance tiers often feature broader memory interfaces, reflecting the growing importance of GPU Memory Bandwidth in demanding scenarios.

Narrower buses, even when paired with fast memory modules, can introduce structural limitations. This configuration may function adequately for moderate workloads but struggle under high-resolution or data-intensive tasks. The resulting bottleneck is architectural rather than computational.
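To see why fast memory struggles to compensate for a narrow interface, the peak-bandwidth relationship can be inverted to ask what per-pin data rate a given bus width would need to hit a target. The 448 GB/s target and the bus widths below are assumed values for illustration.

```python
def required_data_rate_gbps(target_bandwidth_gbs, bus_width_bits):
    """Per-pin data rate (Gbps) needed to reach a target peak bandwidth."""
    return target_bandwidth_gbs * 8 / bus_width_bits


target = 448.0  # GB/s, assumed target for the comparison
for bus_bits in (256, 192, 128):
    rate = required_data_rate_gbps(target, bus_bits)
    print(f"{bus_bits}-bit bus needs about {rate:.1f} Gbps per pin")
```

Halving the bus width doubles the data rate each pin must sustain, which is why a narrow interface sets a structural limit that faster modules can only partly offset.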

Bandwidth Sensitivity Across Game Genres

Different rendering profiles exhibit varying bandwidth dependencies. Competitive titles optimized for high frame rates may emphasize compute efficiency, while visually rich experiences often stress memory subsystems. High-fidelity assets, complex shading models, and expansive environments magnify reliance on GPU Memory Bandwidth.

This variation explains why GPU performance can fluctuate dramatically between applications. A graphics card delivering excellent results in one title may reveal limitations in another due to differing memory transaction patterns. Bandwidth behavior thus becomes context-dependent rather than universally predictable.

Common Purchasing Misjudgments

Consumer decisions frequently prioritize core counts and advertised performance metrics. Memory characteristics receive comparatively less attention despite their substantial impact. Overlooking GPU Memory Bandwidth can lead to mismatched hardware selections, particularly for users targeting high resolutions or memory-heavy workloads.

Balanced system design requires acknowledging that compute power and memory throughput function as interdependent components. Disproportionate emphasis on either aspect may yield suboptimal results. Bandwidth awareness therefore plays a critical role in realistic performance expectations.

External Validation and Technical References

Technical analyses from independent sources consistently highlight memory subsystem influence. Detailed architectural examinations and empirical testing reinforce the significance of bandwidth in modern GPU behavior. Authoritative references such as TechPowerUp GPU Database and research resources from AnandTech provide valuable insights into how memory configurations correlate with observed performance.

Reframing GPU Performance Evaluation

Bandwidth considerations reshape how graphics hardware should be assessed. Rather than viewing GPUs solely through computational metrics, a broader perspective recognizes memory throughput as a defining constraint. GPU Memory Bandwidth influences frame stability, asset handling, and rendering efficiency across diverse workloads.

Understanding this dynamic enables more accurate interpretation of performance anomalies and hardware behavior. It also clarifies why certain architectural choices yield tangible advantages despite similar compute specifications. Bandwidth is not merely a supporting metric; it is a foundational determinant of modern graphics performance.

Conclusion

The evolution of graphics technology continues to intensify memory demands. Visual fidelity, rendering complexity, and real-time effects all expand reliance on efficient data movement. Within this landscape, GPU Memory Bandwidth stands as one of the most consequential yet underappreciated aspects of GPU architecture.

Recognizing bandwidth limitations provides critical context for understanding real-world performance behavior. It reveals bottlenecks invisible in simplified comparisons and highlights the importance of balanced hardware design. As rendering workloads grow increasingly data-intensive, bandwidth awareness becomes essential for both enthusiasts and everyday users seeking consistent graphics performance.