Game Engine Thread Scheduling Systems: CPU Task Management Technology Behind Stable Performance in Modern Games

Modern video games rely on extremely complex processing pipelines that must run in real time without interruption. As game worlds become larger, physics simulations more detailed, and rendering systems more advanced, the workload placed on the CPU grows dramatically. To keep performance stable, modern engines depend on a highly specialized system known as Game Engine Thread Scheduling. This technology controls how tasks are distributed across CPU cores and ensures that every system inside the engine runs at the right time without causing slowdowns, stutter, or frame drops.

Unlike older game engines that executed most logic in a single thread, modern engines divide their workload into dozens of parallel tasks. Rendering, physics, animation, AI, audio, streaming, and scripting may all run simultaneously. Without an intelligent scheduling system, these tasks would compete for CPU time, leading to unstable performance. The purpose of Game Engine Thread Scheduling is to coordinate these operations efficiently while keeping frame times consistent, which is essential for smooth gameplay.

As hardware evolved from single-core processors to multi-core and multi-threaded architectures, engine developers were forced to redesign the internal structure of their engines. Today, almost every modern engine uses advanced scheduling strategies to fully utilize available CPU resources. These systems are not visible to players, but they play a critical role in maintaining performance stability, especially in large open-world titles where thousands of operations must be processed every frame.

The Role of CPU Task Management in Modern Game Engines

Every frame rendered by a game requires multiple subsystems to work together in a precise order. The engine must update physics simulations, process player input, run AI logic, stream assets, update animations, and prepare rendering commands before the GPU can draw the final image. If any of these steps take too long, the entire frame is delayed. This is why Game Engine Thread Scheduling is designed to balance workload across multiple cores instead of relying on a single execution path.

In early game engines, most of the logic ran sequentially. While this approach was simple, it limited performance because only one CPU core could be used at a time. Modern engines solve this problem by splitting work into small jobs that can run in parallel. The scheduler then decides which thread should execute each job and when it should run. This allows the engine to keep all CPU cores busy without overloading any single one.

The importance of efficient scheduling becomes even clearer in large-scale environments. Technologies such as Game Asset Streaming systems constantly load and unload world data while the player moves. Without proper thread management, streaming operations could block gameplay logic and cause visible stutter. By using Game Engine Thread Scheduling, engines keep background tasks from interrupting critical gameplay updates.

How Multi-Threaded Architecture Changed Game Engine Design

The shift to multi-core processors forced developers to rethink how engines are structured internally. Instead of running one large update loop, modern engines divide the frame into many independent tasks. Each task can run on a different thread, allowing the engine to process more data in less time. This architecture requires a sophisticated scheduling system capable of tracking dependencies between tasks and executing them in the correct order.

For example, physics simulation must usually be updated before animation, and animation must be updated before rendering. The scheduler ensures that these dependencies are respected while still running as many tasks as possible in parallel. This balance between order and concurrency is one of the most important responsibilities of Game Engine Thread Scheduling.

The need for parallel execution becomes even greater when advanced simulation systems are involved. Modern physics engines, for example, may process hundreds of collisions every frame. Articles discussing Game Physics Engine Architecture often highlight how simulation performance depends heavily on CPU task distribution. Without a reliable scheduler, physics calculations could monopolize CPU time and cause frame instability.

In addition to physics, rendering preparation also relies on multi-threading. While the GPU handles drawing, the CPU must prepare command buffers, update scene data, and manage visibility calculations. These operations are usually split into multiple jobs that run across several threads. The scheduler coordinates these jobs so that the GPU always receives data on time.

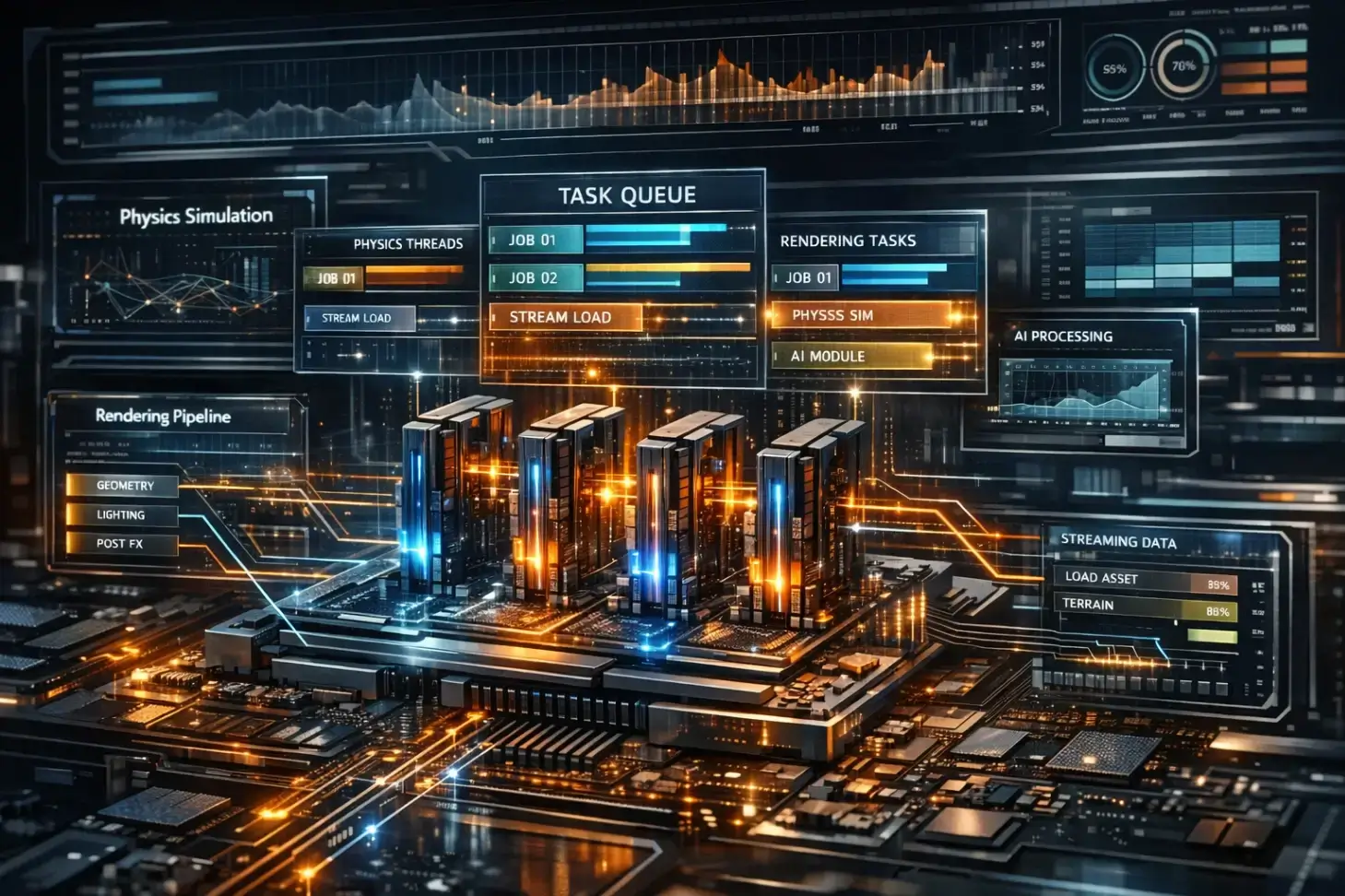

Task Queues, Worker Threads, and Frame Synchronization

Most modern engines implement Game Engine Thread Scheduling using a job-based system. Instead of assigning large tasks to specific threads, the engine breaks work into small units called jobs. These jobs are placed in task queues, and worker threads pull jobs from the queue whenever they become available. This approach keeps all CPU cores active and reduces idle time.
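The job-queue pattern described above can be sketched in a few lines. This is a minimal illustration, not how any particular engine implements it: the `run_jobs` helper and the job names are hypothetical, and production engines typically write this layer in C++ with lock-free queues. Note also that CPython threads do not execute CPU-bound Python code in parallel, so the sketch shows the structure of the pattern rather than its speedup.

```python
import queue
import threading

def run_jobs(jobs, num_workers=4):
    """Run (name, callable) jobs on worker threads fed by one shared queue."""
    job_queue = queue.Queue()
    results = {}
    results_lock = threading.Lock()

    def worker():
        while True:
            item = job_queue.get()
            if item is None:              # sentinel: no more work for this worker
                job_queue.task_done()
                return
            name, fn = item
            value = fn()
            with results_lock:            # the results dict is shared by all workers
                results[name] = value
            job_queue.task_done()

    workers = [threading.Thread(target=worker) for _ in range(num_workers)]
    for w in workers:
        w.start()
    for job in jobs:
        job_queue.put(job)
    for _ in workers:
        job_queue.put(None)               # one sentinel per worker
    job_queue.join()                      # wait until every queued item is processed
    for w in workers:
        w.join()
    return results

# Hypothetical per-frame jobs; each is a small, independent unit of work.
frame_jobs = [
    ("ai", lambda: sum(range(1000))),
    ("physics", lambda: 2 ** 10),
    ("render_prep", lambda: len("command buffer")),
]
frame_results = run_jobs(frame_jobs, num_workers=3)
```

Whichever worker becomes free first takes the next job, so no job is tied to a specific thread.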

Worker threads usually run in parallel throughout the entire frame. While one thread updates AI, another may process physics, and another may prepare rendering data. Because these jobs are small, the scheduler can quickly move work between threads if one core becomes overloaded. This flexibility is essential for maintaining consistent frame times.

Frame synchronization is another critical responsibility of the scheduler. All tasks required for a frame must finish before the engine can present the final image. If even one task runs late, the frame will be delayed. To prevent this, Game Engine Thread Scheduling carefully monitors execution time and prioritizes critical jobs over background work.
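As a rough sketch of the "all tasks must finish before presenting" rule, the example below submits a frame's jobs to a thread pool and blocks until every one has completed. The job functions and `run_frame` are hypothetical names chosen for illustration; a real engine would use its own job system rather than Python's `concurrent.futures`.

```python
from concurrent.futures import ThreadPoolExecutor, wait

# Hypothetical frame jobs; each returns a label so the result is easy to inspect.
def update_physics(frame):
    return f"physics:{frame}"

def update_animation(frame):
    return f"animation:{frame}"

def build_draw_list(frame):
    return f"draw_list:{frame}"

def run_frame(pool, frame, jobs):
    """Submit every job for this frame, then block until all have finished."""
    futures = [pool.submit(job, frame) for job in jobs]
    wait(futures)                         # default: return when ALL futures complete
    return [f.result() for f in futures]  # results in submission order

presented = []
with ThreadPoolExecutor(max_workers=3) as pool:
    for frame in range(2):
        # The frame is only "presented" after the synchronization point above.
        presented.append(
            run_frame(pool, frame, [update_physics, update_animation, build_draw_list])
        )
```

The slowest job determines when `run_frame` returns, which is why a single late task delays the whole frame.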

Background operations such as procedural content generation, audio processing, and world streaming often run with lower priority. Systems described in Procedural Generation Systems in Modern Games can generate large amounts of data dynamically, but they must not interfere with gameplay updates. The scheduler ensures that these heavy operations run only when CPU time is available.

Load Balancing and CPU Core Utilization

One of the biggest challenges in engine development is keeping all CPU cores busy without creating bottlenecks. If one core is overloaded while others are idle, performance will suffer even if the processor has many threads available. This is why modern engines use dynamic load balancing as part of Game Engine Thread Scheduling.

Dynamic scheduling allows the engine to distribute tasks based on current workload instead of using fixed assignments. When a thread finishes its work, it immediately requests another job from the queue. This prevents idle time and ensures that every core contributes to the frame. The result is more stable performance and better scalability across different hardware configurations.
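The difference between fixed assignment and dynamic scheduling can be illustrated with a small simulation. The job costs below are made-up numbers, and both functions are simplified models (no dependencies, no scheduling overhead) rather than a description of any real engine.

```python
import heapq

def static_makespan(costs_ms, workers):
    """Fixed round-robin assignment: job i always goes to worker i % workers."""
    loads = [0.0] * workers
    for i, cost in enumerate(costs_ms):
        loads[i % workers] += cost
    return max(loads)

def dynamic_makespan(costs_ms, workers):
    """Dynamic assignment: the next job goes to whichever worker is free first."""
    free_at = [0.0] * workers             # time at which each worker becomes idle
    heapq.heapify(free_at)
    for cost in costs_ms:
        start = heapq.heappop(free_at)    # earliest available worker
        heapq.heappush(free_at, start + cost)
    return max(free_at)

# Hypothetical job costs in milliseconds: two heavy jobs among many light ones.
job_costs_ms = [4.0, 1.0, 1.0, 1.0, 4.0, 1.0, 1.0, 1.0]

static_ms = static_makespan(job_costs_ms, workers=4)    # 8.0
dynamic_ms = dynamic_makespan(job_costs_ms, workers=4)  # 5.0
```

With round-robin assignment both heavy jobs land on the same worker, so the frame takes 8 ms while three workers sit idle. Pulling work on demand finishes the same jobs in 5 ms.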

Load balancing is especially important on consoles and PCs with different CPU architectures. Some systems have fewer cores with higher clock speeds, while others have many slower cores. A well-designed scheduling system adapts automatically to these differences, allowing the same engine to run efficiently on multiple platforms.

Because modern games must support a wide range of hardware, developers spend significant time optimizing Game Engine Thread Scheduling. Even small improvements in task distribution can reduce frame time spikes and improve the overall smoothness of the game.

External References

For deeper technical documentation about multi-threading and engine architecture, developers often refer to official engine resources such as Unreal Engine Documentation and Unity Engine Technical Resources.

These sources describe how modern engines implement task systems, worker threads, and frame synchronization to achieve stable real-time performance.

Understanding these concepts makes it clear that Game Engine Thread Scheduling is one of the most important technologies behind modern game stability. Without it, the complexity of today’s rendering systems, physics simulations, and open-world streaming would make consistent frame rates impossible.

The rest of this article examines advanced scheduling strategies used in modern engines, including priority systems, dependency graphs, async pipelines, and the techniques used to prevent micro-stutter in large-scale games.

Advanced Scheduling Strategies Used in Modern Game Engines

As modern engines became more complex, simple task queues were no longer enough to maintain stable performance. Developers began implementing advanced techniques inside Game Engine Thread Scheduling to handle thousands of small tasks per frame without creating delays. These techniques allow the engine to control execution order, prioritize critical operations, and prevent CPU spikes that could cause visible stutter during gameplay.

One of the most important improvements in Game Engine Thread Scheduling is the use of dependency graphs. Instead of running tasks in a fixed order, the engine builds a graph that describes which tasks depend on others. The scheduler then executes any job that has no pending dependency, allowing multiple systems to run in parallel while still preserving correct results.
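A dependency graph of this kind can be sketched with Python's standard `graphlib` module (Python 3.9 or later). The task names and edges below are assumptions made for illustration. Grouping ready tasks into "waves" is a simplification: a real scheduler would usually dispatch each job the moment its last dependency completes.

```python
from graphlib import TopologicalSorter

# Hypothetical frame graph: each task maps to the set of tasks it depends on.
frame_graph = {
    "input": set(),
    "audio": set(),
    "ai": {"input"},
    "physics": {"input"},
    "animation": {"physics"},
    "render_prep": {"animation", "ai"},
}

def schedule_in_waves(graph):
    """Return lists of tasks; tasks inside one list have no pending dependencies."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()                       # also raises CycleError on cyclic graphs
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready()) # everything runnable right now
        waves.append(ready)                # these could run in parallel
        sorter.done(*ready)                # mark complete, unlocking dependents
    return waves

waves = schedule_in_waves(frame_graph)
# [['audio', 'input'], ['ai', 'physics'], ['animation'], ['render_prep']]
```

Here `ai` and `physics` overlap because neither depends on the other, while `render_prep` waits for both of its inputs.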

Dependency-based scheduling is especially useful in large scenes where rendering, physics, animation, and streaming must all interact. Without a dependency system, the engine would need to run many tasks sequentially, reducing performance even on powerful CPUs. With modern Game Engine Thread Scheduling, these tasks can overlap safely, reducing frame time and improving stability.

Priority Systems and Critical Frame Tasks

Not all engine tasks have the same importance. Some operations must finish before the frame ends, while others can be delayed without affecting gameplay. For this reason, priority management is a core feature of modern Game Engine Thread Scheduling. The scheduler assigns different priority levels to tasks and ensures that critical jobs always run first.

Input processing, physics updates, and rendering preparation usually have the highest priority because they directly affect what the player sees. Background operations such as audio streaming or asset loading often run with lower priority. By controlling execution order, Game Engine Thread Scheduling prevents heavy background work from interrupting gameplay.
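One simple way to model this ordering is a priority queue. The sketch below is an illustration under assumed priority levels (`CRITICAL`, `NORMAL`, `BACKGROUND`) and a hypothetical `PriorityJobQueue` class; it is not taken from any specific engine.

```python
import heapq
import itertools

# Assumed priority levels; lower numbers run first.
CRITICAL, NORMAL, BACKGROUND = 0, 1, 2

class PriorityJobQueue:
    """Pops the most urgent job first; equal priorities keep submission order."""

    def __init__(self):
        self._heap = []
        self._sequence = itertools.count()  # tie-breaker for equal priorities

    def push(self, priority, name):
        heapq.heappush(self._heap, (priority, next(self._sequence), name))

    def pop(self):
        _priority, _seq, name = heapq.heappop(self._heap)
        return name

    def __len__(self):
        return len(self._heap)

jobs = PriorityJobQueue()
jobs.push(BACKGROUND, "asset_loading")
jobs.push(CRITICAL, "input")
jobs.push(BACKGROUND, "audio_streaming")
jobs.push(CRITICAL, "physics")
jobs.push(NORMAL, "ai")
jobs.push(CRITICAL, "render_prep")

execution_order = [jobs.pop() for _ in range(len(jobs))]
# ['input', 'physics', 'render_prep', 'ai', 'asset_loading', 'audio_streaming']
```

Background jobs were submitted first but still run last, which is the behavior the priority levels are meant to enforce.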

Priority systems also help reduce micro-stutter, which happens when a frame takes longer than expected. If the scheduler detects that the frame budget is almost exceeded, it can delay low-priority tasks and finish only the essential ones. This dynamic behavior allows modern engines to maintain smooth frame pacing even during intense scenes.
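The budget check described above might look roughly like the following. The 16.6 ms budget (about 60 frames per second), the job names, and the cost estimates are all illustrative assumptions, and the model treats costs as if they ran on one thread for simplicity.

```python
def plan_frame(jobs, budget_ms):
    """jobs: list of (name, estimated_ms, is_critical) tuples.

    Critical jobs always run. Optional jobs run only if they still fit in
    the remaining budget; otherwise they are deferred to a later frame.
    """
    to_run, deferred = [], []
    used_ms = 0.0
    for name, cost, is_critical in jobs:
        if is_critical:
            to_run.append(name)
            used_ms += cost
    for name, cost, is_critical in jobs:
        if is_critical:
            continue
        if used_ms + cost <= budget_ms:
            to_run.append(name)
            used_ms += cost
        else:
            deferred.append(name)
    return to_run, deferred, used_ms

# Hypothetical cost estimates in milliseconds.
frame_jobs = [
    ("input", 0.5, True),
    ("physics", 5.0, True),
    ("render_prep", 6.0, True),
    ("audio_stream", 2.0, False),
    ("asset_load", 4.0, False),
    ("navmesh_rebuild", 1.5, False),
]

to_run, deferred, used_ms = plan_frame(frame_jobs, budget_ms=16.6)
# asset_load would push the frame to 17.5 ms, so it is deferred.
```

In this run the frame uses 15.0 ms, and only `asset_load` is pushed to a later frame.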

Asynchronous Pipelines and Parallel Frame Processing

Another major advancement in Game Engine Thread Scheduling is the use of asynchronous pipelines. Instead of completing all work for one frame before starting the next, the engine processes multiple frames in different stages at the same time. While one frame is being rendered, another may be updating physics, and a third may be preparing streaming data.

This pipeline approach improves CPU utilization because threads spend less time waiting for a single frame to finish. However, it also increases complexity because tasks from different frames must not interfere with each other. Modern Game Engine Thread Scheduling systems track frame boundaries carefully to ensure that data is synchronized correctly.
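To make the overlap concrete, the sketch below prints which frame occupies which stage at each step of a three-stage pipeline. The stage names are hypothetical, and each stage is assumed to take exactly one "tick", which real workloads do not.

```python
# Hypothetical pipeline stages, in the order a frame passes through them.
STAGES = ["simulate", "render_prep", "submit"]

def pipeline_timeline(num_frames):
    """Return, for each tick, a dict mapping stage name to the frame it holds."""
    timeline = []
    total_ticks = num_frames + len(STAGES) - 1
    for tick in range(total_ticks):
        active = {}
        for stage_index, stage in enumerate(STAGES):
            frame = tick - stage_index    # frame N enters stage S at tick N + S
            if 0 <= frame < num_frames:
                active[stage] = frame
        timeline.append(active)
    return timeline

timeline = pipeline_timeline(num_frames=3)
for tick, active in enumerate(timeline):
    print(tick, active)
```

At tick 2 all three stages are busy with different frames. Three frames complete in 5 ticks instead of the 9 that strictly sequential processing would need, at the cost of each stage needing its own copy of the frame data it touches.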

Asynchronous execution is particularly important in large open-world games where asset streaming, AI updates, and environment simulation continue even when the player is not looking at them. Without a strong scheduling system, these background operations could easily overload the CPU.

Preventing Frame Time Spikes and Micro-Stutter

One of the main goals of Game Engine Thread Scheduling is to keep frame times consistent. Players usually notice performance problems not when the average frame rate drops, but when individual frames take too long to render. These spikes often happen when too many tasks run at the same time or when a single thread becomes overloaded.
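A short calculation shows why averages hide this problem. The frame times below are invented for illustration, and the 16.7 ms budget assumes a 60 frames-per-second target.

```python
def frame_stats(frame_times_ms):
    """Return (average, worst) frame time in milliseconds."""
    average = sum(frame_times_ms) / len(frame_times_ms)
    return average, max(frame_times_ms)

def count_spikes(frame_times_ms, budget_ms):
    """Count frames that exceeded the per-frame budget."""
    return sum(1 for t in frame_times_ms if t > budget_ms)

# Two hypothetical captures of ten frames each.
steady = [16.0] * 10
spiky = [12.0] * 9 + [52.0]

steady_avg, steady_worst = frame_stats(steady)   # (16.0, 16.0)
spiky_avg, spiky_worst = frame_stats(spiky)      # (16.0, 52.0)

steady_spikes = count_spikes(steady, budget_ms=16.7)  # 0
spiky_spikes = count_spikes(spiky, budget_ms=16.7)    # 1
```

Both captures average 16 ms per frame, yet the second contains one 52 ms frame that a player would perceive as a hitch.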

To avoid this problem, modern schedulers constantly monitor execution time. If a task takes longer than expected, the system can redistribute work to other threads or delay non-critical jobs. This adaptive behavior is a key reason why modern engines feel smoother even when running very complex scenes.

Frame pacing is especially important in competitive games, where even small stutters can affect player input and camera movement. Because of this, developers spend a significant amount of time optimizing Game Engine Thread Scheduling to keep performance predictable across as many conditions as possible.

Scalability Across Different Hardware Architectures

Modern games must run on a wide range of hardware, from low-power laptops to high-end gaming PCs and consoles. Each system has a different number of CPU cores, different cache sizes, and different thread performance characteristics. A fixed scheduling system would not work efficiently on all of them, which is why scalable Game Engine Thread Scheduling is essential.

Scalable schedulers detect how many cores are available and adjust the number of worker threads automatically. On systems with many cores, the engine creates more parallel jobs. On systems with fewer cores, tasks are merged to reduce overhead. This flexibility allows the same engine to maintain stable performance on multiple platforms.
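A rough sketch of this adaptation is shown below. The policy of reserving two threads (for example, a main thread and a render thread) and creating two batches per worker is an assumption chosen for the example, not a rule from any engine. `os.cpu_count()` reports logical cores.

```python
import os

def pick_worker_count(reserved_threads=2, detected_cores=None):
    """Choose a worker count, leaving some cores for non-worker threads."""
    cores = detected_cores if detected_cores is not None else (os.cpu_count() or 1)
    return max(1, cores - reserved_threads)

def batch_items(items, worker_count, batches_per_worker=2):
    """Merge items into batches so fewer cores means fewer, larger jobs."""
    target_batches = worker_count * batches_per_worker
    batch_size = max(1, -(-len(items) // target_batches))  # ceiling division
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]

entities = list(range(100))                 # hypothetical entities to update

many_core = pick_worker_count(detected_cores=16)   # 14 workers
few_core = pick_worker_count(detected_cores=2)     # 1 worker

many_core_batches = batch_items(entities, many_core)  # 25 batches of 4
few_core_batches = batch_items(entities, few_core)    # 2 batches of 50
```

The same 100 updates become 25 small jobs on the 16-core configuration and 2 large jobs on the 2-core one, which keeps per-job overhead proportional to the parallelism actually available.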

Console development makes this tuning easier because hardware specifications are fixed, allowing developers to fine-tune Game Engine Thread Scheduling for a known core count. On PC, the scheduler must be more dynamic because the engine cannot predict the exact CPU configuration.

Synchronization, Barriers, and Safe Data Access

Running many threads at the same time introduces another challenge: data synchronization. When multiple tasks access the same data, the engine must ensure that the results remain correct. Modern Game Engine Thread Scheduling uses synchronization barriers, locks, and atomic operations to prevent conflicts between threads.
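As a minimal illustration of lock-protected shared data, the sketch below has several threads update one counter. The `CollisionCounter` class and the job are hypothetical; native engines often use atomic operations for simple counters like this, which Python's standard library does not expose directly.

```python
import threading

class CollisionCounter:
    """A counter that several threads can update without losing increments."""

    def __init__(self):
        self._lock = threading.Lock()
        self._count = 0

    def add(self, amount):
        with self._lock:                  # only one thread may modify at a time
            self._count += amount

    @property
    def value(self):
        with self._lock:
            return self._count

counter = CollisionCounter()

def physics_job():
    # Hypothetical job: report 1000 detected collisions, one at a time.
    for _ in range(1000):
        counter.add(1)

threads = [threading.Thread(target=physics_job) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

total_collisions = counter.value          # 4000
```

Locks like this are correct but serialize access, so engines try to keep shared writes rare, for example by giving each job its own output slot and merging afterwards.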

Barriers are often used at the end of major frame stages. For example, physics must finish before animation begins, and animation must finish before rendering starts. The scheduler waits until all required jobs are complete before moving to the next stage. This guarantees stability without sacrificing parallel performance.
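The stage barrier described here can be sketched with Python's `threading.Barrier`. The two stages and the arithmetic inside them are placeholders for illustration; the point is only that no thread reads stage-one output until every thread has finished writing it.

```python
import threading

NUM_WORKERS = 4
positions = [0.0] * NUM_WORKERS           # written during the "physics" stage
blended = [0.0] * NUM_WORKERS             # written during the "animation" stage
stage_barrier = threading.Barrier(NUM_WORKERS)

def worker(index):
    # Stage 1 (physics): each worker writes only its own slot.
    positions[index] = float(index + 1)

    # No worker continues until all workers have finished stage 1.
    stage_barrier.wait()

    # Stage 2 (animation): it is now safe to read every slot.
    blended[index] = positions[index] + sum(positions)

threads = [threading.Thread(target=worker, args=(i,)) for i in range(NUM_WORKERS)]
for t in threads:
    t.start()
for t in threads:
    t.join()
# blended is now [11.0, 12.0, 13.0, 14.0]
```

Without the barrier, a fast worker could compute `sum(positions)` while other slots were still zero, producing results that vary from run to run.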

Efficient synchronization is difficult to design, but it is necessary for large engines that run thousands of tasks every second. Poor synchronization can cause crashes, visual errors, or inconsistent gameplay. Because of this, Game Engine Thread Scheduling is considered one of the most technically demanding parts of engine architecture.

Future Directions of Thread Scheduling Technology

As CPUs continue to increase core counts, scheduling systems will become even more important. Future engines will likely rely on more advanced forms of Game Engine Thread Scheduling that use predictive algorithms and workload analysis to decide how tasks should run. Some experimental engines already use task graphs that change dynamically based on player actions.

Another trend is closer integration between CPU scheduling and GPU pipelines. Modern graphics APIs allow asynchronous compute, which means the CPU scheduler must coordinate with the GPU to avoid bottlenecks. This makes Game Engine Thread Scheduling not only a CPU feature, but part of the entire rendering architecture.

As game worlds become more detailed and simulations more complex, the importance of efficient scheduling will continue to grow. Stable performance in modern games depends not only on powerful hardware, but on the intelligent systems that manage how that hardware is used.