CPU Cache Performance: The Hidden Force Shaping Frame Stability and Gaming Smoothness

Modern gaming discussions tend to orbit around familiar metrics: graphics cards, clock speeds, memory bandwidth, and frame rates. These are visible, marketable, and easy to quantify. Yet beneath the surface of every smooth frame and every responsive input lies a less discussed component that frequently determines how a system truly behaves: CPU cache performance. While gamers often compare core counts or boost frequencies, cache architecture quietly influences frame pacing, asset processing, and simulation consistency in ways that raw specifications alone cannot explain.

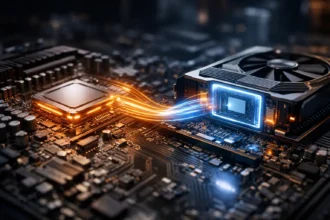

At its core, cache exists to solve a fundamental problem in computing: latency. Main system memory, despite being incredibly fast by historical standards, is still slow relative to the operating speed of a processor. A modern CPU core can execute billions of operations per second, but a trip to RAM typically costs on the order of hundreds of clock cycles, long enough to stall execution pipelines. Cache mitigates this mismatch by storing frequently accessed data closer to the execution units, drastically reducing effective latency. When analyzing CPU cache performance, one is essentially examining how efficiently a processor feeds itself the data required to maintain continuous work without interruption.

Latency Hierarchies Inside the Processor

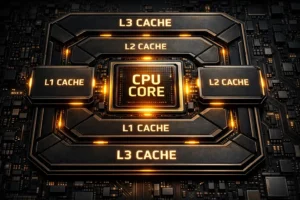

CPU cache is structured as a hierarchy rather than a single pool of memory. Each level serves a distinct purpose. L1 cache prioritizes extreme speed with minimal capacity. L2 cache balances speed with moderate size. L3 cache, typically shared across cores, provides much larger storage at noticeably higher latency, often several times that of L2. This layered design reflects a central truth: proximity to execution logic directly affects performance characteristics. CPU cache performance is therefore not just about size, but about how effectively these layers cooperate under dynamic workloads such as those generated by modern games.
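One way to see how the levels cooperate is to estimate the average cost of a memory access across the whole hierarchy. The sketch below is a simplified model, not a description of any specific processor: the function name `average_access_cycles`, the latencies, and the hit rates are all illustrative assumptions.

```python
def average_access_cycles(levels, dram_cycles):
    """Estimate average cycles per memory access for a cache hierarchy.

    levels: (hit_latency_cycles, hit_rate) pairs ordered from L1 outward.
            Each hit rate is the share of accesses reaching that level
            that are satisfied there.
    dram_cycles: cost of an access that misses every cache level.
    """
    total = 0.0
    reach = 1.0  # share of accesses that get this far down the hierarchy
    for latency, hit_rate in levels:
        total += reach * hit_rate * latency
        reach *= 1.0 - hit_rate
    return total + reach * dram_cycles


# Illustrative numbers only, not measurements of any real processor.
DRAM_CYCLES = 250
good_l3 = [(4, 0.95), (14, 0.80), (45, 0.75)]
poor_l3 = [(4, 0.95), (14, 0.80), (45, 0.25)]

good_cycles = average_access_cycles(good_l3, DRAM_CYCLES)
poor_cycles = average_access_cycles(poor_l3, DRAM_CYCLES)
print(f"good L3 hit rate: {good_cycles:.2f} cycles per access")
print(f"poor L3 hit rate: {poor_cycles:.2f} cycles per access")
```

Under these assumed numbers only about 1% of accesses ever reach L3, yet how many of them hit there moves the average by roughly a fifth (about 5.3 versus 6.3 cycles). That is the sense in which the layers have to cooperate: a weakness in one level is paid for at the next, slower one.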

Gaming workloads differ dramatically from synthetic benchmarks. Games involve continuous simulation, unpredictable player input, streaming assets, physics calculations, and AI logic, all of which generate constantly shifting data access patterns. Unlike linear computational tasks, games frequently revisit small chunks of data while also introducing bursts of new information. Efficient caching ensures that high-priority data remains accessible without expensive memory fetches. When CPU cache performance is strong, frame generation becomes more consistent because execution units spend less time waiting and more time processing.

Frame Stability Beyond Raw FPS

Frame rate metrics alone rarely capture the full experience of gameplay smoothness. Two systems delivering identical average FPS can feel dramatically different. Variations in frame delivery times, micro-stutters, and irregular pacing often stem from CPU behavior rather than GPU throughput. Cache efficiency influences this behavior directly. When critical simulation data remains resident in low-latency cache, the CPU maintains steady processing cycles. When cache misses occur frequently, memory fetch delays accumulate, creating irregular execution timing that manifests as frame instability.
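The gap between average FPS and perceived smoothness is easy to show with numbers. The sketch below uses two made-up frame-time traces (they are not measurements from any game) and a hypothetical helper, `frame_stats`, that reports average FPS alongside the 99th-percentile frame time.

```python
import statistics


def frame_stats(frame_times_ms):
    """Summarize a frame-time trace given in milliseconds per frame."""
    ordered = sorted(frame_times_ms)
    n = len(ordered)
    # Nearest-rank 99th percentile: ceil(0.99 * n), computed with integers.
    rank = -((-99 * n) // 100)
    avg_ms = statistics.fmean(ordered)
    return {
        "avg_fps": 1000.0 / avg_ms,
        "p99_ms": ordered[rank - 1],
        "worst_ms": ordered[-1],
    }


# Two invented traces with the same total time over 100 frames.
steady = [10.0] * 100               # every frame takes 10 ms
spiky = [9.0] * 95 + [29.0] * 5     # mostly faster, with occasional stalls

steady_stats = frame_stats(steady)
spiky_stats = frame_stats(spiky)
print("steady:", steady_stats)
print("spiky: ", spiky_stats)
```

Both traces average 100 FPS, but the 99th-percentile frame time is 10 ms in the first and 29 ms in the second. An average-FPS counter cannot tell them apart; a frame-time percentile can.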

This connection between CPU cache performance and frame consistency is particularly important in simulation-heavy games. Titles involving complex world states, dynamic entities, or extensive AI logic rely on rapid access to structured data sets. Cache-friendly data locality minimizes disruptions. Poor locality, by contrast, forces repeated memory retrieval, introducing latency spikes. The result is not necessarily lower FPS, but uneven frame pacing, a phenomenon many players recognize but struggle to attribute to specific hardware characteristics.

Data Locality and Game Engine Behavior

Modern game engines are designed around large volumes of structured data: entity lists, physics states, rendering commands, animation parameters, and streaming buffers. The arrangement of this data strongly influences CPU cache performance. Sequential, predictable access patterns promote cache hits. Fragmented or scattered access patterns increase cache misses. Engines optimized for data locality often produce smoother gameplay not because of higher computational power, but because they align more effectively with processor memory hierarchies.
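The effect of access order can be illustrated with a toy cache model. The sketch below assumes a fully associative LRU cache of 512 lines of 64 bytes and hypothetical 16-byte entity records. Real caches are set-associative, use other replacement policies, and prefetch ahead of sequential reads, so treat the numbers as directional only.

```python
import random
from collections import OrderedDict

LINE_BYTES = 64      # assumed cache line size
ENTITY_BYTES = 16    # hypothetical size of one entity record
ENTITY_COUNT = 65536


def hit_rate(addresses, cache_lines=512):
    """Fraction of accesses served by a small LRU cache of whole lines."""
    cache = OrderedDict()
    hits = 0
    for addr in addresses:
        line = addr // LINE_BYTES
        if line in cache:
            hits += 1
            cache.move_to_end(line)        # mark as most recently used
        else:
            cache[line] = True
            if len(cache) > cache_lines:
                cache.popitem(last=False)  # evict least recently used line
    return hits / len(addresses)


# Same set of addresses, visited in two different orders.
sequential_order = [i * ENTITY_BYTES for i in range(ENTITY_COUNT)]
scattered_order = sequential_order[:]
random.Random(0).shuffle(scattered_order)

sequential_hits = hit_rate(sequential_order)
scattered_hits = hit_rate(scattered_order)
print(f"sequential: {sequential_hits:.2%} hits")
print(f"scattered:  {scattered_hits:.2%} hits")
```

The sequential pass hits on three of every four accesses because four 16-byte records share each 64-byte line. The shuffled pass touches exactly the same bytes but rarely finds a line still resident when it returns to it, which is the situation a pointer-chasing or fragmented data layout tends to create.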

This distinction explains why different games stress processors in different ways. Some engines prioritize parallel workloads with predictable data reuse. Others generate irregular patterns that challenge cache efficiency. In both cases, CPU cache performance becomes a defining factor in how consistently frames are produced. Importantly, this behavior is independent of graphical complexity. Even visually simple scenes can create heavy CPU pressure if underlying data flows defeat cache locality.

Core Counts Versus Cache Efficiency

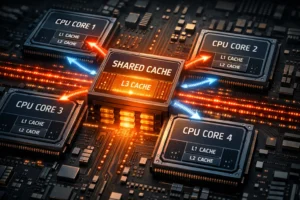

A persistent misconception in gaming hardware discussions equates more cores with universally better performance. While additional cores expand theoretical parallel capacity, they do not guarantee improved results. Cache behavior complicates this assumption. Shared cache resources, inter-core communication, and thread scheduling overhead all shape effective performance. CPU cache performance often dictates whether extra cores translate into meaningful gains or simply introduce coordination penalties.

Certain gaming workloads remain latency-sensitive rather than throughput-bound. For these tasks, rapid access to cached data outweighs raw parallelism. Processors with well-balanced cache hierarchies can therefore outperform alternatives with higher core counts in real-world gaming scenarios. This outcome challenges traditional upgrade logic but reflects the nuanced reality of game engine execution.
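A toy, Amdahl-style model makes the tradeoff concrete. It assumes a frame consists of a serial portion (for example, a main game thread) plus work that divides evenly across cores, and that a better cache hierarchy shortens the serial portion by reducing stalls. The function `frame_time_ms` and every number below are hypothetical.

```python
def frame_time_ms(serial_ms, parallel_ms, cores):
    """Toy frame-time model: serial work plus evenly divided parallel work."""
    return serial_ms + parallel_ms / cores


PARALLEL_MS = 12.0  # assumed per-frame work that scales across cores

# Hypothetical CPU A: more cores, but the serial path stalls more on memory.
many_cores = frame_time_ms(serial_ms=6.0, parallel_ms=PARALLEL_MS, cores=8)

# Hypothetical CPU B: fewer cores, but a shorter serial path.
better_cache = frame_time_ms(serial_ms=4.0, parallel_ms=PARALLEL_MS, cores=6)

print(f"8 cores, slower serial path: {many_cores:.1f} ms per frame")
print(f"6 cores, faster serial path: {better_cache:.1f} ms per frame")
```

In this model the six-core part finishes each frame in 6.0 ms against 7.5 ms for the eight-core part, because the serial path dominates once the parallel work has been divided. Real engines are far less tidy, but the direction of the effect is the point.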

Understanding CPU cache performance thus requires shifting perspective away from headline specifications toward architectural efficiency. Smooth gaming experiences depend on continuous data availability, predictable execution timing, and minimal latency disruptions. Cache systems sit at the center of this balance, shaping system responsiveness in subtle yet decisive ways.

Cache Contention and Multithreaded Workloads

As game engines increasingly adopt multithreaded designs, cache dynamics grow more complex. Threads executing on separate cores may compete for shared cache resources, especially at the L3 level. This contention can introduce variability even when overall CPU utilization appears low. CPU cache performance in such contexts depends not only on cache size but also on replacement policies, coherency mechanisms, and scheduling behavior. Efficient designs minimize destructive interference between threads, preserving predictable access latency.

Cache contention effects become visible during scenes involving heavy simulation bursts, asset streaming transitions, or large-scale entity updates. While GPUs handle rendering tasks, CPUs orchestrate logic, synchronization, and data preparation. When cache thrashing occurs, the cache repeatedly evicts data that is needed again shortly afterward and must reload it from a slower level, increasing effective latency. The impact manifests as frame pacing irregularities rather than outright performance collapse, making diagnosis challenging without deeper architectural awareness.
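Thrashing in a shared cache can be sketched with the same kind of toy model. Here two hypothetical threads each loop over a working set of 300 cache lines while sharing a 512-line LRU cache. The strict LRU policy and the perfectly alternating interleave are simplifying assumptions, so the cliff is sharper than real hardware would show.

```python
from collections import OrderedDict


def lru_hit_rate(line_stream, capacity):
    """Hit rate of an LRU cache holding `capacity` lines."""
    cache = OrderedDict()
    hits = total = 0
    for line in line_stream:
        total += 1
        if line in cache:
            hits += 1
            cache.move_to_end(line)
        else:
            cache[line] = None
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict least recently used line
    return hits / total


def looping_thread(name, working_set_lines, passes):
    """Yield the lines touched by a thread that re-walks its working set."""
    for _ in range(passes):
        for i in range(working_set_lines):
            yield (name, i)


def interleave(a, b):
    """Alternate accesses from two threads, one line at a time."""
    for x, y in zip(a, b):
        yield x
        yield y


SHARED_LINES = 512  # assumed shared cache capacity, in lines

alone = lru_hit_rate(looping_thread("sim", 300, 10), SHARED_LINES)
shared = lru_hit_rate(
    interleave(looping_thread("sim", 300, 10),
               looping_thread("stream", 300, 10)),
    SHARED_LINES,
)
print(f"one thread alone:    {alone:.0%} hits")
print(f"two threads sharing: {shared:.0%} hits")
```

Each working set fits comfortably on its own (90% hits over ten passes, with the misses all in the first pass), but the combined 600 lines exceed the cache, and under strict LRU every line is evicted just before it is needed again. Neither thread is doing more work than before; they are simply destroying each other's cached data.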

Memory Access Patterns in Real Gameplay

Real gameplay generates diverse memory access patterns. Player movement, camera shifts, physics interactions, and AI decisions continuously reshape working data sets. CPU cache performance reflects how efficiently processors adapt to these shifting patterns. Strong cache hierarchies sustain high hit rates across varying workloads, smoothing transitions between computational phases. Weak hierarchies amplify latency penalties during unpredictable data flows.

This variability explains why benchmark results often diverge from lived gaming experiences. Synthetic tests emphasize steady-state workloads, whereas games operate under fluctuating conditions. Cache efficiency therefore becomes a stabilizing force, ensuring that transient workload changes do not translate into perceptible stutters.

Thermal Dynamics and Cache Behavior

Thermal conditions indirectly influence cache-related performance characteristics. Sustained workloads may trigger clock frequency reductions. Caches that run at core clock slow down along with the core, while main memory latency measured in nanoseconds stays roughly the same, so the relative cost of cache hits and misses shifts and per-frame CPU time grows. While discussions of throttling often focus on graphics processors, CPUs exhibit similar adaptive behavior. Maintaining stable operating conditions supports consistent CPU cache performance by preserving predictable execution timing. Readers interested in broader stability considerations may find relevant parallels in GPU Thermal Throttling: The Silent Performance Killer in Modern Graphics Cards.
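A short calculation shows how a frequency change alters the relationship between cache and memory latency. It assumes, to a first approximation, that a core-clocked cache has a fixed latency in cycles and that DRAM has a fixed latency in nanoseconds. The 14-cycle L2 hit, the 80 ns DRAM latency, and the two clock speeds are illustrative values, and shared caches often run on a separate clock that this sketch ignores.

```python
def cache_hit_ns(hit_cycles, core_ghz):
    """Wall-clock time of a cache hit whose latency is fixed in core cycles."""
    return hit_cycles / core_ghz


def dram_miss_cycles(dram_latency_ns, core_ghz):
    """Core cycles spent waiting on a DRAM access whose latency is fixed in ns."""
    return dram_latency_ns * core_ghz


L2_HIT_CYCLES = 14      # assumed
DRAM_LATENCY_NS = 80.0  # assumed

for ghz in (5.0, 4.0):  # boosted versus thermally limited clock
    hit_ns = cache_hit_ns(L2_HIT_CYCLES, ghz)
    miss_cycles = dram_miss_cycles(DRAM_LATENCY_NS, ghz)
    print(f"{ghz:.1f} GHz: L2 hit = {hit_ns:.2f} ns, "
          f"DRAM miss = {miss_cycles:.0f} cycles")
```

Dropping from 5.0 to 4.0 GHz makes every L2 hit take 3.5 ns instead of 2.8 ns, while a DRAM miss still costs about 80 ns. Cache-resident work slows with the clock even though its hit rate is unchanged, which is one way thermal behavior leaks into frame timing.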

Frame Time Consistency Revisited

Cache efficiency also intersects with frame time behavior. Irregular memory stalls can produce uneven frame delivery intervals even when average performance appears strong. The perception of smoothness relies on stability rather than peak throughput. This relationship mirrors principles explored in Frame Time Consistency: The Invisible Factor Behind Truly Smooth Gaming, where timing variability proves more impactful than headline frame rates.

Architectural Tradeoffs Across Processors

Processor designs reflect different priorities: cache size, latency optimization, power efficiency, and parallel scalability. CPU cache performance varies accordingly. Some architectures emphasize large shared caches to improve data reuse. Others focus on minimizing latency through aggressive prefetching and scheduling heuristics. Neither approach guarantees universal superiority. Game behavior, engine structure, and workload composition ultimately determine which characteristics deliver tangible benefits.

These tradeoffs complicate simplistic upgrade decisions. Comparing clock speeds or core counts alone overlooks deeper factors governing execution stability. Cache hierarchies, coherency latency, and memory subsystem design frequently shape real-world outcomes more strongly than visible specifications suggest.

External References

For readers seeking deeper technical context, processor memory hierarchies and cache mechanisms are extensively documented in academic and industry resources. Authoritative discussions of cache design and latency behavior can be found in Intel’s Software Developer Manuals and AMD’s Processor Architecture Documentation. These materials provide valuable insights into how hardware structures influence execution dynamics.

Ultimately, CPU cache performance represents one of the most influential yet underappreciated variables in gaming hardware behavior. While marketing narratives spotlight visible metrics, cache efficiency governs the continuity of computation itself. Frame stability, simulation consistency, and responsiveness all emerge from the processor’s ability to access data without interruption. Appreciating this reality refines hardware evaluation, revealing that smooth gameplay often depends less on raw speed and more on architectural harmony.